Efficient Animal Behavior Analysis and Video Summarization via Gaze Target Estimation Models

Abstract

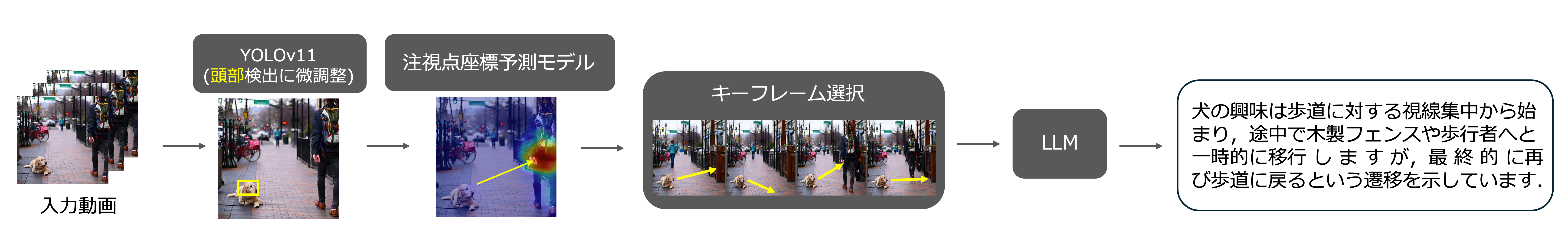

I propose a method that uses animal (dog) gaze as a cue to make video analysis with large language models (LLMs) more efficient. First, I developed a highly accurate gaze estimation model with a DINOv2 backbone, trained on a custom-built dog dataset of approximately 5,000 images. Next, I applied spatiotemporal clustering to the estimated gaze coordinates to extract the frames in which the animal focused its attention, treating these frames as semantic turning points in the video. I demonstrated that this adaptive sampling enables summarization that accurately preserves behavioral context while reducing costs.
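The frame-selection step can be illustrated with a minimal sketch. The abstract does not specify the clustering algorithm, so the code below substitutes a simple distance-threshold fixation grouping as an assumption: consecutive frames whose gaze point stays close to a running centroid form one segment, and the middle frame of each sufficiently long segment is kept. The function name `select_key_frames` and the parameters `max_dist` and `min_len` are hypothetical, and gaze coordinates are assumed to be normalized to [0, 1].

```python
from math import hypot


def select_key_frames(gaze, max_dist=0.05, min_len=5):
    """Pick one representative frame per sustained-gaze segment.

    gaze     : list of (x, y) gaze estimates, one per frame (assumed normalized).
    max_dist : hypothetical spatial threshold to the segment's running centroid.
    min_len  : hypothetical minimum number of consecutive frames in a segment.
    """
    if not gaze:
        return []

    key_frames = []
    start = 0            # first frame of the current candidate segment
    cx, cy = gaze[0]     # running centroid of the current segment

    for i in range(1, len(gaze) + 1):
        if i < len(gaze):
            x, y = gaze[i]
            if hypot(x - cx, y - cy) <= max_dist:
                # Frame stays near the centroid: extend the segment.
                n = i - start
                cx = (cx * n + x) / (n + 1)
                cy = (cy * n + y) / (n + 1)
                continue

        # The segment [start, i) has ended (gaze jumped or video finished).
        if i - start >= min_len:
            key_frames.append((start + i - 1) // 2)  # middle frame

        if i < len(gaze):
            start = i
            cx, cy = gaze[i]

    return key_frames


# Synthetic example: two sustained fixations separated by a brief glance.
gaze_track = [(0.2, 0.2)] * 6 + [(0.8, 0.8)] * 2 + [(0.5, 0.5)] * 7
selected = select_key_frames(gaze_track)
print(selected)
```

On this synthetic track the two-frame glance is discarded and frames 2 and 11 are selected, so only 2 of 15 frames would be forwarded for summarization. A density-based method over (x, y, t) could replace the grouping rule without changing the interface.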

Paper

Video

Material

Citation

- Suguru Takahashi. Efficient Animal Behavior Analysis and Video Summarization via Gaze Target Estimation Models. Master Thesis, February 2026.